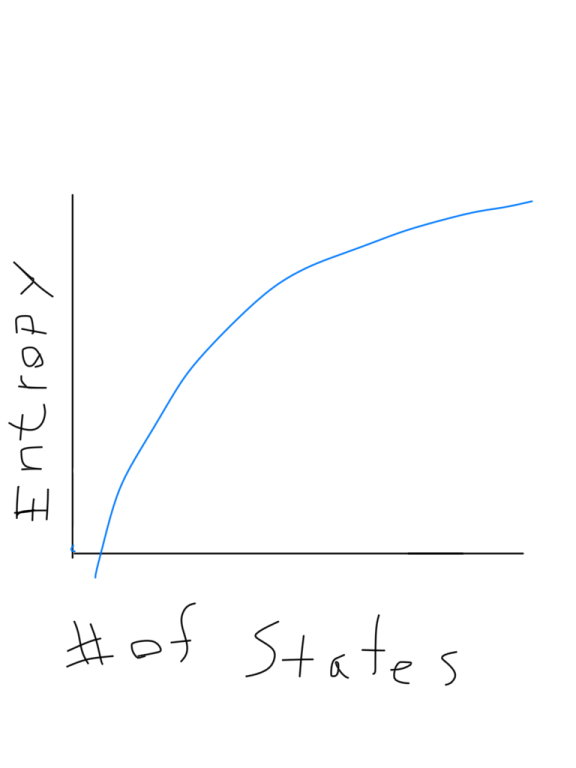

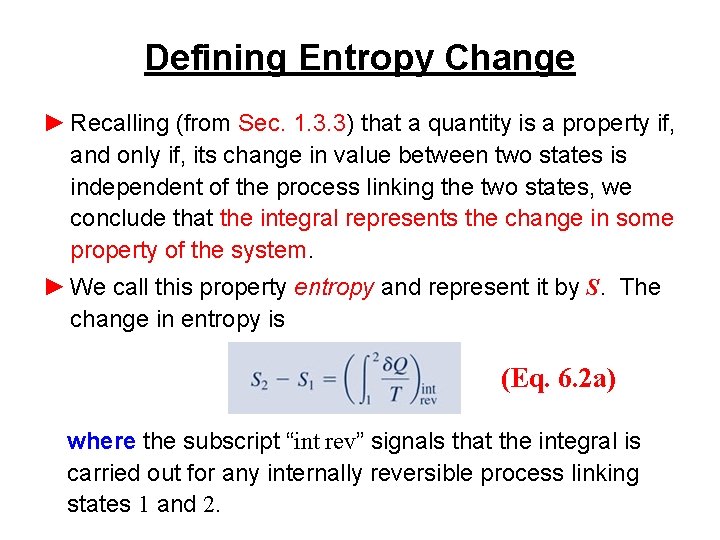

Qualitatively, entropy is a measure of how much the energy of atoms and molecules becomes spread out in a process. It can be defined in terms of the statistical probabilities of a system or in thermodynamic terms.

The relationship which was originally used to define entropy S is dS = dQ/T. This is often a sufficient definition of entropy if you don't need to know about the microscopic details. It can be integrated to calculate the change in entropy during a part of an engine cycle. For the case of an isothermal process it can be evaluated simply by ΔS = Q/T. In this context, the change in entropy can be described as the heat added per unit temperature, and it has the units of joules per kelvin (J/K) or eV/K. The specific entropy (s) of a substance is its entropy per unit mass: it equals the total entropy (S) divided by the total mass (m). In a chemical reaction, forming more moles of product than of reactant generally increases entropy.

One way to define the quantity "entropy" is to do it in terms of the multiplicity Ω, the number of ways a given state can be produced: S = k ln Ω. This is Boltzmann's expression for entropy, and in fact it is carved onto his tombstone! (Actually, S = k ln W is there, but the Ω is typically used in current texts (see Wikipedia).) The k is included as part of the historical definition of entropy and gives the units joule/kelvin in the SI system of units. The logarithm is used to make the defined entropy of reasonable size. The multiplicity for ordinary collections of matter is inconveniently large (its logarithm alone is on the order of Avogadro's number), so using the logarithm of the multiplicity as entropy is convenient. It also gives the right kind of behavior for combining two systems. The entropy of the combined systems will be the sum of their entropies, but the multiplicity will be the product of their multiplicities. The fact that the logarithm of the product of two multiplicities is the sum of their individual logarithms gives the proper kind of combination of entropies.

For a system of a large number of particles, like a mole of atoms, the most probable state will be overwhelmingly probable. You can with confidence expect that the system at equilibrium will be found in the state of highest multiplicity, since fluctuations from that state will usually be too small to measure. As a large system approaches equilibrium, its multiplicity (entropy) tends to increase. This is a way of stating the second law of thermodynamics.
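The two definitions above can be tried out numerically. The following is a minimal Python sketch, not taken from the original text: the function names and the sample multiplicities (10^20 and 10^30) are made up for illustration. It evaluates S = k ln Ω, checks that the entropy of two combined systems is the sum of their entropies, and evaluates ΔS = Q/T for an isothermal process.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (SI defined value)


def boltzmann_entropy(multiplicity):
    """Return S = k ln(Omega) in J/K for a given multiplicity Omega."""
    if multiplicity < 1:
        raise ValueError("multiplicity must be at least 1")
    return K_B * math.log(multiplicity)


def isothermal_entropy_change(heat_joules, temperature_kelvin):
    """Return Delta S = Q / T in J/K for heat added at constant temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive")
    return heat_joules / temperature_kelvin


# Hypothetical multiplicities for two independent systems (illustrative only).
omega_a, omega_b = 10**20, 10**30

# Multiplicities multiply when systems are combined; entropies add.
s_combined = boltzmann_entropy(omega_a * omega_b)
s_sum = boltzmann_entropy(omega_a) + boltzmann_entropy(omega_b)
print(math.isclose(s_combined, s_sum))

# 600 J of heat added at a constant 300 K.
print(isothermal_entropy_change(600.0, 300.0), "J/K")
```

Python integers are arbitrary precision, so the product of the two multiplicities is formed exactly before the logarithm is taken. For physically realistic multiplicities one would work with ln Ω directly rather than with Ω itself.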

When a pair of dice is thrown, the multiplicity for two dots showing is just one, because there is only one arrangement of the dice which will give that state. The multiplicity for seven dots showing is six, because there are six arrangements of the dice which will show a total of seven dots.

Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. In information theory, the relative entropy D(p_k‖q_k) quantifies the increase in the average number of units of information needed per symbol if the encoding is optimized for the distribution q_k instead of the true distribution p_k.
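The dice multiplicities quoted above can be checked by brute-force enumeration. This is a small illustrative Python sketch; the function name `dice_multiplicities` is an assumption made here, not something from the original text.

```python
from collections import Counter
from itertools import product


def dice_multiplicities(n_dice=2, sides=6):
    """Count how many arrangements of the dice give each total number of dots."""
    rolls = product(range(1, sides + 1), repeat=n_dice)
    return Counter(sum(roll) for roll in rolls)


multiplicity = dice_multiplicities()
total_arrangements = sum(multiplicity.values())

# The probability of each total is proportional to its multiplicity.
for dots in sorted(multiplicity):
    probability = multiplicity[dots] / total_arrangements
    print(f"{dots:2d} dots: multiplicity {multiplicity[dots]}, probability {probability:.3f}")
```

With two six-sided dice there are 36 equally likely arrangements, so a total of seven (multiplicity six) is six times as likely as a total of two (multiplicity one).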

The probability of finding a system in a given state depends upon the multiplicity of that state. That is to say, it is proportional to the number of ways you can produce that state. Here a "state" is defined by some measurable property which would allow you to distinguish it from other states. In throwing a pair of dice, that measurable property is the sum of the number of dots facing up. In thermodynamic terms, entropy is the measure of a system's thermal energy per unit temperature that is unavailable for doing useful work.

An integer number of nats is not, generally speaking, a meaningful thing. At least I've never seen such a thing arise in any sensible physical or mathematical situation. Nats are quite similar to radians in that respect: one rarely if ever finds oneself considering an angle of exactly one radian, but we use them anyway because they simplify the mathematics.

That's not to say you can't contrive an example. For example, Wolfram|Alpha tells me that a discrete three-state system has an entropy of one nat if P_1 = … For a more physical example of a one-nat quantity, see this question by Mark Eichenlaub. I'm currently trying to work out if there's a connection between this physical example and the mathematical one I've just presented.
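To see how a one-nat system can be contrived, here is a hedged Python sketch. It does not reproduce the Wolfram|Alpha example mentioned above, whose probabilities are not given here. Instead it assumes a hypothetical one-parameter family of three-state distributions (p, (1 - p)/2, (1 - p)/2) and uses bisection to find the p at which the entropy, measured with the natural logarithm, equals one nat. All function names are illustrative.

```python
import math


def entropy_nats(probs):
    """Shannon entropy -sum(p ln p) in nats; zero-probability states contribute 0."""
    if not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log(p) for p in probs if p > 0)


def three_state(p):
    """Hypothetical family: one state has probability p, the other two share the rest."""
    rest = (1.0 - p) / 2.0
    return [p, rest, rest]


def solve_one_nat(lo=1.0 / 3.0, hi=1.0, tol=1e-12):
    """Bisect on p in [1/3, 1], where the entropy falls from ln 3 to 0."""
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if entropy_nats(three_state(mid)) > 1.0:
            lo = mid  # entropy still above one nat, so move p upward
        else:
            hi = mid
    return (lo + hi) / 2.0


p_one_nat = solve_one_nat()
print("uniform three-state entropy:", entropy_nats([1 / 3, 1 / 3, 1 / 3]), "nats")
print("p giving one nat:", p_one_nat)
print("check:", entropy_nats(three_state(p_one_nat)), "nats")
```

The uniform three-state distribution has entropy ln 3, slightly more than one nat, so the probabilities have to be skewed a little to land on exactly one. That illustrates why an integer number of nats only shows up when it is engineered.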
